The traditional image of a professor hunched over a stack of papers with a red pen is fading fast. In 2026, from London to New York, your next essay is more likely to be read by an algorithm than a human being. Universities are increasingly adopting Automated Essay Scoring (AES) and AI-driven grading systems to manage massive workloads and provide “instant” feedback. While this promises efficiency, a growing concern is quietly echoing through lecture halls: Algorithmic Bias. For students, understanding how these digital graders work—and where they fail—is no longer optional; it is a vital part of modern digital literacy.

The shift toward automation isn’t just about speed; it’s about the massive data sets used to “teach” these machines how to grade. However, if the data used to train an AI contains historical prejudices or favors a specific cultural dialect, the AI will inevitably replicate those same flaws. This is where the human element remains irreplaceable. Many students seeking to understand these complex academic standards look for assignment help online through platforms like myassignmenthelp, which provide the human nuance that automated systems frequently overlook. By combining technology with expert oversight, students can better navigate a landscape where a machine might misinterpret their unique voice as a technical error.

The Technical Reality: How AI “Reads” Your Work

To advocate for fairness, we must first understand the mechanics of the “Digital Grader.” An AI does not “read” for meaning in the way a human does. Instead, it uses Natural Language Processing (NLP) to convert your sentences into mathematical vectors. It looks for patterns: vocabulary diversity, sentence length, structural markers, and “semantic similarity” to a pre-graded set of high-scoring essays.
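To make the idea of “patterns” concrete, here is a minimal Python sketch of how an essay could be reduced to a handful of numbers. It is an illustration only, not how any particular grading product works: the function name `surface_features` and the `SIGNPOSTS` word list are assumptions invented for this example.

```python
import re

# Hypothetical list of structural markers; a real system would define its own.
SIGNPOSTS = {"furthermore", "conversely", "consequently", "however", "therefore"}


def surface_features(essay: str) -> dict[str, float]:
    """Reduce an essay to a few numeric features a scorer could consume."""
    # Split on runs of sentence-ending punctuation and drop empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", essay) if s.strip()]
    words = re.findall(r"[a-z']+", essay.lower())
    if not words or not sentences:
        return {
            "type_token_ratio": 0.0,
            "mean_sentence_length": 0.0,
            "signpost_count": 0.0,
        }
    return {
        # Vocabulary diversity: distinct words divided by total words.
        "type_token_ratio": len(set(words)) / len(words),
        # Average number of words per sentence.
        "mean_sentence_length": len(words) / len(sentences),
        # How many structural markers appear in the text.
        "signpost_count": float(sum(w in SIGNPOSTS for w in words)),
    }


features = surface_features(
    "The law is clear. However, the court disagreed. "
    "Consequently, the appeal failed."
)
print(features)
```

Notice that nothing in these numbers captures whether the argument is actually sound, which is exactly the gap a human reader fills.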

If the “training data” (the thousands of essays the AI studied to learn what an ‘A’ looks like) is biased toward a specific demographic, the AI creates a narrow definition of success. For instance, if the training set consists primarily of essays from students who attended elite private schools, the AI might implicitly penalize “plain English” or alternative rhetorical styles that are perfectly valid but “statistically” different from its training set.
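The toy Python sketch below shows how this can happen. It scores an essay by its word overlap (cosine similarity over word counts) with a reference set of “high-scoring” essays. This is a deliberately crude stand-in for the learned similarity measures real systems may use, and the sample sentences and function names are invented for illustration.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Count lowercase word occurrences in a text."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def similarity_score(essay: str, reference_essays: list[str]) -> float:
    """Best similarity to any pre-graded, high-scoring reference essay."""
    vec = bag_of_words(essay)
    return max(
        (cosine(vec, bag_of_words(ref)) for ref in reference_essays),
        default=0.0,
    )


# Invented toy data: the "training set" contains one ornate register only.
references = [
    "The legislation ostensibly ameliorates inequity yet engenders "
    "unforeseen ramifications"
]
ornate = "The legislation ameliorates inequity yet engenders ramifications"
plain = "The law tries to fix unfairness but causes new problems"

ornate_score = similarity_score(ornate, references)
plain_score = similarity_score(plain, references)
print(f"ornate: {ornate_score:.2f}, plain: {plain_score:.2f}")
```

Both sentences make roughly the same point, yet the plain-English version scores far lower simply because its vocabulary is absent from the reference set.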

The 5 Faces of Algorithmic Bias in Education

Bias in grading isn’t just one problem; it is a collection of technical and social failures. Here is a breakdown of how these biases manifest in a 2026 classroom:

| Type of Bias | How it Works | Impact on Students |
| --- | --- | --- |
| Linguistic Bias | Penalizes non-standard English or regional dialects in written form. | ESL (English as a Second Language) students receive lower marks for “style.” |
| Socio-Economic Bias | Rewards specific vocabulary markers associated with high-income backgrounds. | Widens the achievement gap between different social classes. |
| Confirmation Bias | The AI looks for “expected” answers rather than rewarding creative or “out-of-the-box” thinking. | Discourages original research and creative problem solving. |
| Instructional Bias | The algorithm is tuned to a specific professor’s old grading habits, which may have been biased. | Inherited prejudices are automated and scaled. |
| Algorithmic Opacity | The “Black Box” problem where the logic behind a grade cannot be explained. | Students cannot appeal grades effectively because the “why” is hidden. |

The “Black Box” Problem and the Right to Explanation

One of the biggest hurdles for students today is the “Black Box” nature of grading algorithms. In many cases, even the professors don’t fully understand why an AI gave a student a ‘C’ instead of an ‘A.’ This lack of transparency makes it incredibly difficult to appeal a grade. If you don’t know the criteria the machine used to penalize you, how can you argue that the grade is unfair?

This is particularly stressful in high-stakes subjects like jurisprudence or legal studies, where a single misunderstood nuance or a missing citation format can change the entire meaning of a complex argument. Because of these complexities, many UK-based students specifically seek out law assignment help to ensure their work is vetted by human legal experts who understand the subtleties of the law that an algorithm might miss.

The “Standardization” Trap: Why Creativity is at Risk

There is a hidden danger in AI grading that goes beyond mere fairness: the death of the unique voice. When students know they are being graded by an algorithm, they often begin to “write for the machine.” This involves using specific keywords, following a rigid 5-paragraph structure, and avoiding any controversial or overly complex metaphors that might confuse the NLP processor.

This creates a “feedback loop” where the AI rewards boring, safe writing, and students respond by producing more boring, safe writing. In 2026, the value of a university degree is tied to critical thinking. If we allow algorithms to dictate the style of our thoughts, we are essentially training students to be second-rate robots rather than first-rate humans.

Moving Toward “Human-in-the-Loop” Solutions

The solution to algorithmic bias isn’t to banish technology. AI is a powerful tool for catching grammar errors and providing basic feedback. The key is a system known as Human-in-the-Loop (HITL). In this model, the AI acts as a “teaching assistant,” highlighting areas of concern or suggesting a preliminary score, but a human educator makes the final decision.

Why HITL is essential:

- Contextual Awareness: Humans understand that a student might be writing about a personal hardship that explains a shift in tone.

- Cultural Nuance: Humans can recognize “brilliance” even if it doesn’t follow a standard structural pattern.

- Accountability: You can talk to a human. You can debate a human. You cannot argue with an “error code” from a server.
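The human-in-the-loop model described above can be sketched in a few lines of Python. This is a hypothetical illustration rather than any university’s actual workflow: the `Submission` fields, the confidence threshold, and the borderline score range are all assumptions chosen for the example. The key design choice is that the AI score is only ever a suggestion, and the final grade stays empty until a human records a decision.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Submission:
    student_id: str
    ai_score: float        # preliminary score suggested by the model (0-100)
    ai_confidence: float   # hypothetical model confidence (0-1)
    human_score: Optional[float] = None

    @property
    def final_score(self) -> Optional[float]:
        # Only a human decision ever becomes the final grade.
        return self.human_score


def review_queue(
    sub: Submission,
    confidence_floor: float = 0.8,
    borderline: Tuple[float, float] = (45.0, 55.0),
) -> str:
    """Decide how closely a human should look; every essay gets a human."""
    low, high = borderline
    if sub.ai_confidence < confidence_floor or low <= sub.ai_score <= high:
        return "detailed_review"
    return "standard_review"


def record_human_decision(sub: Submission, score: float) -> Submission:
    """Store the educator's grade, which overrides the AI suggestion."""
    sub.human_score = score
    return sub


essay = Submission("s-001", ai_score=52.0, ai_confidence=0.91)
queue = review_queue(essay)        # borderline score, so flagged for detail
record_human_decision(essay, 61.0)
print(queue, essay.final_score)
```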

How to Protect Your Academic Integrity in an AI World

As a student in 2026, you are the first generation to navigate this fully automated landscape. To ensure your grades reflect your true ability, consider the following strategies:

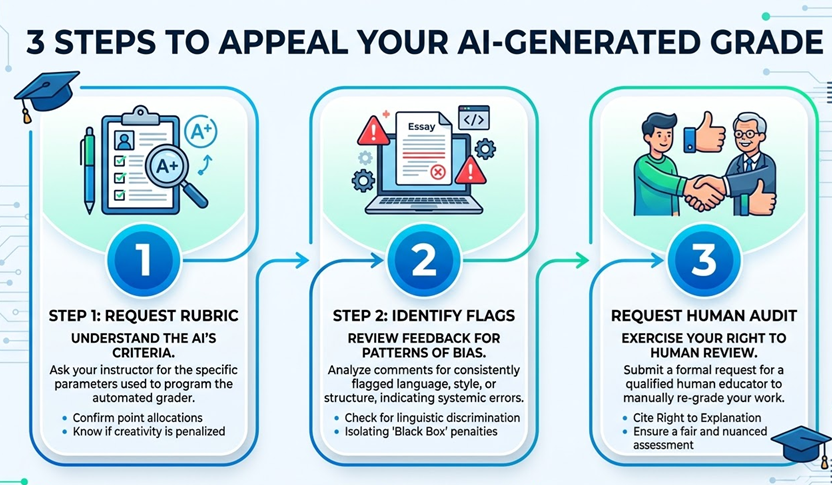

1. Demand the Rubric

Before submitting any major project, ask your instructor if an AI tool will be used for the initial screening. If the answer is yes, ask for the specific rubric or “parameters” the AI is looking for. Knowing if the machine prioritizes “vocabulary complexity” over “argumentative flow” can help you adjust your strategy without losing your voice.

2. Focus on Logical Signposting

Algorithms love structure. Use “signposts”—words like Furthermore, Conversely, Consequently, and In conclusion—to help the AI track your logic. While a human can follow a subtle transition, a machine needs these “hooks” to recognize that you are making a coherent point.

3. Request a Human Audit

If you receive a grade that feels “off,” don’t just accept it. Most universities in 2026 have policies regarding the “Right to Human Review.” If an automated system has scored you, you are often entitled to have a human professor look at the work, especially if you can point to potential linguistic or cultural bias in the feedback.

The Ethics of Grading Equity: A Global Perspective

Algorithmic fairness isn’t just a technical challenge for software engineers; it is a civil rights issue for the digital age. In a globalized world, students from every corner of the globe are competing for the same opportunities. If a student in Mumbai or Nairobi is penalized because their version of English doesn’t match a “Silicon Valley” training set, we have failed the mission of global education.

Universities have a moral obligation to audit their software. This means checking for “Demographic Parity”: comparing the pass/fail rates of different ethnic and social groups and ensuring they remain consistent regardless of whether a human or a machine is grading.
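As a rough illustration of what such an audit could look like, the Python sketch below compares pass rates per group under human and machine grading. The records are fabricated toy numbers, the group labels “A” and “B” are placeholders, and the pass mark of 50 is an assumption. A real audit would need far larger samples and proper statistical testing.

```python
from collections import defaultdict


def pass_rates(records, grader, pass_mark=50.0):
    """Pass rate per group for one grader ('human' or 'machine')."""
    passed = defaultdict(int)
    total = defaultdict(int)
    for record in records:
        total[record["group"]] += 1
        # A True comparison adds 1, a False comparison adds 0.
        passed[record["group"]] += record[grader] >= pass_mark
    return {group: passed[group] / total[group] for group in total}


def parity_gap(rates):
    """Largest difference in pass rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Fabricated toy data: each essay scored by both a human and a machine.
records = [
    {"group": "A", "human": 62, "machine": 64},
    {"group": "A", "human": 55, "machine": 58},
    {"group": "A", "human": 48, "machine": 51},
    {"group": "A", "human": 71, "machine": 70},
    {"group": "B", "human": 60, "machine": 49},
    {"group": "B", "human": 53, "machine": 47},
    {"group": "B", "human": 45, "machine": 41},
    {"group": "B", "human": 68, "machine": 66},
]

human_gap = parity_gap(pass_rates(records, "human"))
machine_gap = parity_gap(pass_rates(records, "machine"))
print(f"human gap: {human_gap:.2f}, machine gap: {machine_gap:.2f}")
```

In this invented data set the human graders pass both groups at the same rate, while the machine opens up a large gap. That is the kind of signal that should trigger a closer review of the software.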

Final Thoughts: Reclaiming the Human Element

As we move further into 2026, the partnership between human intelligence and artificial intelligence will only grow tighter. Technology can help us grade faster and provide more feedback, but it should never be the final judge of a student’s potential or passion.

The goal of education is to expand the mind, not to fit it into a pre-defined mathematical box. By staying informed about how these systems work, advocating for transparency, and utilizing expert resources when the machine fails to understand your nuance, you can ensure that your hard work is recognized for its true value. Let’s use AI to support our learning, but let’s keep the “human” at the heart of the grade.

FAQs

What is algorithmic bias in academic grading?

It occurs when automated software mirrors human prejudices or statistical flaws found in its training data. This can lead to unfair scoring based on a student’s writing style, cultural dialect, or linguistic background rather than the actual quality of their ideas.

How can I tell if my essay was graded by an AI?

Most institutions now disclose the use of automated tools in their course handbooks. Clues often include receiving feedback almost instantly after submission or receiving comments that focus heavily on structural patterns and vocabulary rather than the specific nuance of your argument.

Do I have the right to challenge an automated grade?

Yes. In most modern academic jurisdictions, students have a “right to explanation.” If you believe a mathematical model has misinterpreted your work or applied a biased standard, you can request a formal manual review by a human educator.

Can AI understand creative or non-traditional writing?

Currently, most grading algorithms prioritize consistency and “standard” structures. Because they score based on probability and existing patterns, highly original or unconventional creative writing can sometimes be flagged as “low probability” or incorrect by the system.

About The Author

Jack Williams is a dedicated academic consultant and researcher with a passion for exploring the intersection of technology and modern education. Representing myassignmenthelp, he focuses on helping students navigate the complexities of the digital classroom while ensuring academic integrity and personal growth remain at the forefront of the learning experience.